No Unicorns

Applying AI to mathematics without a detour through Silicon Valley

[Grigori] Perelman’s aversion to public spectacle and to riches[1] is mystifying to many. … I want to say I have complete empathy and admiration for his inner strength and clarity, to be able to know and hold true to himself. Our true needs are deeper–yet in our modern society most of us reflexively and relentlessly pursue wealth, consumer goods and admiration. We have learned from Perelman’s mathematics. Perhaps we should also pause to reflect on ourselves and learn from Perelman’s attitude toward life.

(William Thurston, quoted in Mathematics without Apologies, Chapter 6)

[I got a bit carried away while preparing this post. Impatient readers are encouraged to skip ahead to the section under the title “No Unicorns” for some encouraging news about applications of AI in mathematics.]

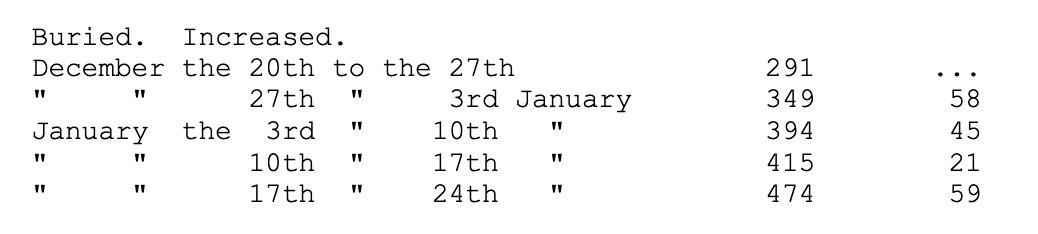

When the pandemic confined most of us to our homes most of the time in early 2020, and no one knew how or when we would be released, I kept my spirits up, as I’m sure many of you also did, by reading accounts of the Plague. I reread The Plague by Camus, which I had last read in high school, about a fictional plague in Oran, as well as Defoe’s A Journal of the Plague Year and chapters 31-33 of Manzoni’s I Promessi Sposi, about the very real plagues in London in 1665 and in Milan in 1630, respectively. Defoe’s fictionalized account — written from the standpoint of a narrator who is witnessing the progress of the disease, although Defoe himself was only 5 years old at the time — includes tables of “bills of mortality” from the early weeks of the plague year.

The plague starts slowly in Camus’ novel as well: there’s a dead rat on p. 9 of my copy, then three more dead rats on the following page; humans start dropping dead a few pages later, and by the beginning of Part II Oran is under quarantine.

When I am confronted with an unfamiliar but significant phenomenon of any kind, my mind spontaneously reaches for analogies, the wilder the better, in order to render the experience more comprehensible. If I were a zombie (which, following Jaron Lanier, I define as an information-processing unit trapped in human form), my native language would be geekspeak, and I would refer to such analogies as hallucinations dredged up from the depths of my portable though inconceivably vast database, since as a zombie I would be a kind of ambulatory LLM that runs on Pringles and instant ramen instead of small modular (nuclear) reactors.

But I am not a zombie, so when reports of the accelerating movement of mathematicians toward the AI industry began to filter in with alarming frequency, my (organic and spontaneous) tendency to free association, colored by the residual anxiety left over from the recent pandemic, led me to observe that this movement is following a progression very similar to the one recorded in Defoe’s bills of mortality. Now, I would never dream of speaking of Silicon Valley and the bubonic plague in the same sentence. My point is only that my mental picture of how the tech industry is picking off mathematicians a few at a time takes the form of a map of the globe, where little green lights representing mathematicians go dark as they migrate to the dark side. This resembles my image of 17th-century London, where 100,000 lights went dark in the space of a year, or my analogous mental maps of Milan in 1630 or of mid-20th-century Oran. Or, closer to home, it resembles the interactive maps of Covid cases in New York published in the spring of 2020, when, to avoid close contact with strangers and possible contamination, I literally crossed the street repeatedly on my rare ventures into the outside world.

I have been planning for some time to write about AI projects in mathematics that are neither carried out in collaboration with DeepMind nor make any use of LLMs, but that are as successful as any that have been widely publicized by the industry, if not more so. The last part of this post will be devoted to a few such projects. But I have been sidetracked by hearing one report after another of colleagues who have chosen to swim in the troubled waters of a “San Francisco Bay of the Mind.” (This is an allusion to the title of a book by the founder of City Lights Bookstore,[2] an institution that has survived the onslaughts of e-commerce in large part thanks to its well-stocked section addressed to those disillusioned by the tech industry. In fact, every independent bookstore I visited in San Francisco last year has such a section! But to return to my digression.) I have noticed with dismay that these colleagues are, without exception, showing signs in their choice of vocabulary (le brutte e terribili marche della pestilenza, “the ugly and terrible marks of the pestilence,” to quote Manzoni) of a cognitive deformation that is all too clear a symptom of excessive time spent in the Valley’s thinking pods.

Signs that your friend is in danger of becoming a zombie

Help should be sought immediately if any of the following are observed:

Using the word “compute” as a noun, specifically a mass noun.

Referring to one’s own cognitive activities in the language of computer programming (geekspeak), such as “search” or “high-dimensional” or “benchmark.”

Referring to one’s professional activities in the language of neoclassical economics (e.g. “marginal cost” or “opportunity cost”).

Redirecting conversations about understanding or consciousness or intentionality to focus on measurable behavior.

Failing to recognize irony.[3]

Why I may be at risk

Are you at risk? I certainly feel I have to be extra careful these days. Just the other day I received an invitation to nominate “companies that drive ongoing market growth” for the Pinnacle Artificial Intelligence Awards.

Is this just one of a proliferating wave of dodgy schemes to exploit the current state of AI hype by extracting multiple $275 nomination fees from gullible readers of AI promotional materials? The “About Us” page on the Pinnacle Awards website provides no information whatsoever about the people behind the awards; all I learned upon consulting the page was that

Winners of the Pinnacle Awards are awarded a digital ribbon.

Why I may be safe after all

The AI labs have taken the world’s intellectual output without compensation, transformed it and made it valuable for their customers. What happens to the companies and jobs they displace is left for “the market” to magically come up with on its own.

(Tim O’Reilly, Financial Times, January 26, 2026)

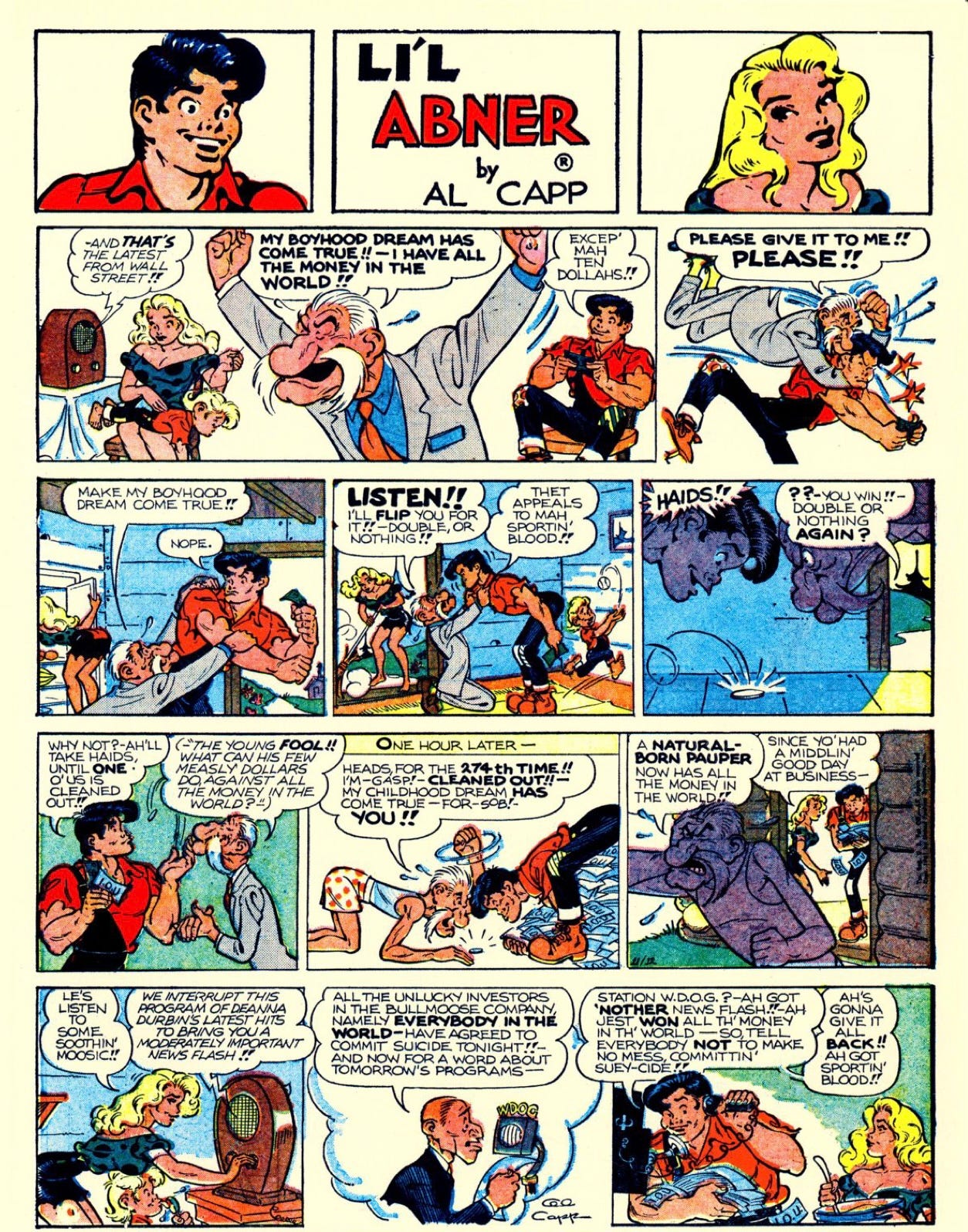

Not to brag, but I already understood this at age 7, thanks to Al Capp’s story of how Li’l Abner foiled General Bullmoose’s “boyhood dream” to acquire “all the money in the world.”

I was reminded of this strip, the concluding episode of a series that ran in successive weeks in the Philadelphia Sunday Bulletin, by one of those wild analogies to which I referred above, when I understood that Silicon Valley’s ambition was to extract a rent from every aspect of human experience (transportation, traveling, reading the news, listening to music, friendship…). And to give credit where it is due: although I immediately began to search for this comic strip to confirm my vivid memory that Abner was still holding on to ten dollars and that General Bullmoose’s underwear had red polka dots, it wasn’t until LLMs reached their current level of performance that I was able to locate the episode in an online repository of pirated newspaper comics and date the memory to 1961, thus establishing a fruitful and ongoing dialogue with my long-lost 7-year-old self.

It has been many years since the funny papers regularly distilled the wisdom of Marx and Engels[4] to the level of the Weekly Reader, but it is still possible, even for adults, to acquire immunity to the industry epidemic by engaging in a practice that is already familiar to mathematicians: responding to a question not by answering it but by questioning its presuppositions. I illustrate with a moment from a long recent exchange between my former Columbia colleague Daniel Litt and two representatives of Epoch AI. Here is the question:

Anson: So compute is really important. Then why aren’t all the mathematicians trying to go into a big lab and work with OpenAI or DeepMind where there’s a huge amount of compute?

Daniel: Model capabilities are not really there yet.…

Here are some possible responses that do not require accepting the question’s premises:

(Easiest example) Because some of “the mathematicians” have read Karen Hao’s book about OpenAI or any of a number of books about Google (for example, this one) and would commit hara-kiri rather than risk being found in an online searchable database alongside any of these characters.

Because some of “the mathematicians” are convinced by some version of the argument of Walter Dean and Alberto Naibo that even the hugest “amount of compute” is irrelevant to the problems that interest them.

Because (referring to the sentences that immediately preceded the passage quoted) the search for “examples of cool stuff” is not the only, or even a primary, driver of mathematical research.

Because even “the mathematicians” to whom none of the previous responses apply and who do want to experiment with AI can do so while maintaining a prophylactic separation from the industry’s blandishments.

It is to a particularly instructive example of this last point that I now turn.

No Unicorns

Today’s title was inspired[5] by Jafar Panahi’s film No Bears (2022). The film’s title refers to the belief, held by some but not all residents of a village in Iran’s Azerbaijan province, that a certain road must be avoided at night because of the danger of bear attacks. This belief in turn serves as a metaphor for the (possibly justified) beliefs that paralyze characters who represent Iran’s urban population.

The (possibly justified) beliefs that appear to be paralyzing those of my colleagues who are not yet zombies are based on the widespread impression that interesting applications of AI in mathematical research can only be obtained in collaboration with trillion-dollar corporations that are investing in automated theorem proving at a rate that would allow them to fund the entire mathematical research enterprise in the US several times over. Even Axiom Math,[6] one of the smaller players in this sandbox, is valued at $300 million, enough to fund 100 mathematicians for 10 years at the extremely generous annual salary of $300K.

Exposure to evidence may help to cure this paralysis. Last September, my former colleague Alberto Mínguez, now of the Universidad de Sevilla, together with Thomas Lanard of the Laboratoire de Mathématiques de Versailles, posted on arXiv a paper entitled An algorithm for Aubert-Zelevinsky duality à la Mœglin-Waldspurger. As the abstract explains, it

was discovered with the help of machine learning tools, guiding us toward patterns that led to its formulation.

Aubert-Zelevinsky duality is an involution on the representations of p-adic groups that was first identified by Andrei Zelevinsky (for general linear groups) and generalized by (my former colleague) Anne-Marie Aubert. The goal of the Lanard-Mínguez project was to give an explicit expression for Aubert’s abstract and formal construction in terms of Langlands parameters. The authors

used machine learning, specifically supervised learning, to develop intuitions and formulate conjectures

No unicorns were needed to develop a neural network for this purpose; rather, as Mínguez explained in a private message, the project was carried out on a laptop:

the code was written in Python using [the open source library] PyTorch. All the machine learning aspects are already implemented in PyTorch.… Once the database has been created, fewer than 100 lines of code are needed to construct and train a neural network.
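To give a sense of what “fewer than 100 lines” can look like, here is a generic supervised-learning loop in PyTorch. It is a minimal sketch, not the authors’ code: the source does not say how their database encodes Langlands parameters, so the integer feature vectors and the labelling rule below are hypothetical stand-ins.

```python
import torch
from torch import nn

torch.manual_seed(0)  # make the toy run reproducible

# Hypothetical "database": 2,000 examples, each an 8-entry vector of small
# integers. The made-up label is 1 when the first four entries sum to more
# than the last four, and 0 otherwise.
X = torch.randint(0, 5, (2000, 8)).float()
y = (X[:, :4].sum(dim=1) > X[:, 4:].sum(dim=1)).float()

# Hold out the last 400 examples to check that the network generalizes.
X_train, y_train = X[:1600], y[:1600]
X_test, y_test = X[1600:], y[1600:]

# A small fully connected network that outputs one logit per example.
model = nn.Sequential(
    nn.Linear(8, 32),
    nn.ReLU(),
    nn.Linear(32, 1),
)
loss_fn = nn.BCEWithLogitsLoss()
optimizer = torch.optim.Adam(model.parameters(), lr=1e-2)

# Full-batch training: predict, measure the loss, backpropagate, update.
for epoch in range(500):
    optimizer.zero_grad()
    logits = model(X_train).squeeze(1)
    loss = loss_fn(logits, y_train)
    loss.backward()
    optimizer.step()

# A positive logit means the network predicts label 1.
with torch.no_grad():
    predictions = (model(X_test).squeeze(1) > 0).float()
    accuracy = (predictions == y_test).float().mean().item()

print(f"held-out accuracy: {accuracy:.3f}")
```

In a project like this one the accuracy figure is presumably not the point; what matters is the patterns the trained network points to, which, as the abstract puts it, guided the authors toward the formulation of their algorithm.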

This sort of thing seems increasingly common. The Lanard-Mínguez article is one of

100 awesome papers exploring the use of artificial intelligence / machine learning / deep learning for mathematical discoveries

on a list curated by Seewoo Lee; the graph accompanying the list shows that the majority of the papers that qualify as “awesome” are based on open source models. I don’t have the patience to go through all 100 papers, but my partial inspection suggests that fewer than half involved collaboration with the industry. At a workshop at Columbia last week, Tristan Buckmaster explained that his most recent joint work on identifying self-similar unstable singularities in solutions to equations of fluid dynamics (the subject of an unusually informative article in Quanta with AI in the title, as well as of my account in this newsletter) used no resources beyond those available at his university.

“What can [our] few measly dollars do against all the money in the world?” Last week markets were “fraught with dual worries that artificial-intelligence giants are overvalued and that their products could upend entire industries.”

Silicon Valley’s allure has created a giant sucking sound of money funneling into the U.S. stock market.…Investors are souring on some speculative bets on unprofitable tech companies, while individual firms’ earnings reports or data-center construction updates spark price swings across the sector.…Worries that AI could disrupt software companies sparked a selloff that spread into chip shares and other companies linked to the infrastructure build-out for AI. Investors instead funneled money into real-economy firms like energy producers and consumer brands.

(David Uberti, Wall Street Journal, February 6, 2026)

It is probably too late to hope that the zombie epidemic can be avoided altogether, but perhaps the evidence that the projected trillion-dollar infrastructure investments are likely to leave research mathematics indifferent will slow its progress and keep a few of those green lights from going dark.

Notes

[1] Grigori Perelman is the Russian mathematician who solved the Poincaré Conjecture, as well as Thurston’s geometrization conjecture, following a strategy first suggested by Richard Hamilton. He then refused both the Fields Medal in 2006 and the million-dollar Clay Millennium Prize, and subsequently quit mathematics altogether, sparking speculation that he was somehow abnormal. Thurston was speaking at the official ceremony in Paris honoring Perelman, in his absence, for his achievement.

[2] Lawrence Ferlinghetti, who notably wrote that he is

perpetually awaiting

a rebirth of wonder

which is something a zombie would never write.

[3] I had a depressing encounter on January 30 with the anonymous bot that wrote my Grokipedia page and grossly misrepresented my position on diversity statements and DEI, attributing to me positions that could have come verbatim from Grok’s chieftain himself.

[4] For example:

the bourgeoisie cannot exist without constantly revolutionising the instruments of production, and thereby the relations of production, and with them the whole relations of society.

[5] …by that spontaneous experience of absurd free association to which I alluded above…

[6] In an astonishing example of synchronicity, on February 6 arXiv posted two preprints by the Axiom Math team claiming fully formalized and fully automatic proofs of new results in mathematics (here’s one), and also posted the first release of a new project called First Proof, which states its objective as follows:

To assess the ability of current AI systems to correctly answer research-level mathematics questions, we share a set of ten math questions which have arisen naturally in the research process of the authors. The questions had not been shared publicly until now; the answers are known to the authors of the questions but will remain encrypted for a short time.

Readers are invited to read these side by side, along with Siobhan Roberts’s New York Times article on First Proof, and draw their own conclusions.
