When the mathematicians of the future consult our digital helper, which face will we want to see?

On Monday, April 22, the Boston Center for the Philosophy and History of Science hosted a one-day conference titled “Mathematics with a human face,” part of a four-year project of the same name based at the University of Bergen in Norway. Juliet Floyd of Boston University was the main local organizer, and I believe she was primarily responsible for the conference abstract:

How important are creativity and the human element to mathematics? In an age of AI and progress in automating proofs, these questions arise. To ask philosophically, we need to include a characterization of actual mathematical practice, and not exclude the cultural, linguistic, pedagogical, computational and conceptual development of mathematics within wider historical and social contexts. The question of the human as mathematician is emblematic for our time, when larger philosophical and cultural questions about the automation of human labor become increasingly central. Is human bias something to be celebrated or eradicated? What is the relation of mathematics to cultural concerns and values? Can we learn from confronting its history in terms of the present?

In 1947, in his Lecture to the London Mathematical Society, Alan Turing raised the question explicitly:

The Masters [i.e., mathematicians] are liable to get replaced because as soon as any technique becomes at all stereotyped it becomes possible to devise a system of instruction tables which will enable the electronic computer to do it for itself. It may happen however that the masters will refuse to do this. They may be unwilling to let their jobs be stolen from them in this way. In that case they would surround the whole of their work with mystery and make excuses, couched in well-chosen gibberish, whenever any dangerous suggestions were made. I think that a reaction of this kind is a very real danger.

There are many philosophical questions embedded in Turing’s remark, which was not simply a throwaway, but a prescient observation about what he elsewhere called “The Cultural Search”, which he believed would become increasingly important over time, and include “the human community as a whole” (1948, “Intelligent Machinery”). In this one-day event philosophers, mathematicians, logicians, historians and computer scientists will take stock of the issues.

Meanwhile, pictures of my university campus were being published in the international media, and the university’s President was summoned to Washington, D.C., for a disastrous hearing. The hearing’s title, “Columbia in Crisis,” is a classic (and probably not inadvertent) illustration of what the philosopher J.L. Austin called a performative utterance: Congress uttered the title and lo, Columbia was in a full-blown crisis, with arrests, evictions, and numerous statements and petitions. These included public calls from within the university for censure of President Baroness Nemat (Minouche) Shafik and, from outside, a call by 1400 academics for a boycott of the university until certain conditions are met, including the President’s resignation.

I was on the road for nearly a week and missed all that, but the crisis continues, and is likely to continue indefinitely, at Columbia and on many other campuses around the country, unless the university (and its donors) make serious concessions. Neither looks likely to give in, and this should serve as yet another reminder of what it means to hand control over the material preconditions of your researchers’ work to rich people who have their own agendas.

But to return to the conference: the first talks were about cultural, philosophical, and sociological aspects of mathematics, mostly by people with whom I have been in regular contact for years, and they were inspirational. There is a small but growing community of mathematicians, historians, and philosophers (at least) who are committed to seeking insight about the nature of our vocation in whatever is ignored by the Central Dogma. All it needs is a memorable name in order to leave its mark on the history of the history of mathematics.

The final session was originally intended to be an exchange between David Mumford and me on the future relations between mathematics and AI, moderated by BU Computer Science Professor Assaf Kfoury. Mumford sent me the slides of his presentation well in advance, and I was planning to comment more directly on their contents, but the events of the “Crisis” created a huge distraction. What follows is a version of the text of my remarks, with apologies for recycling material that has already appeared here. I hope recordings of all the talks will be made public.

Whose human face?

David Mumford’s presentation hints that mathematics may be at a turning point, because of AI, and mentions in passing an earlier turning point when pure and applied mathematicians went their separate ways.

It’s always difficult to think beyond the epistemic framework of one’s own training, which in my case took place entirely after that turning point.

David Mumford was a major influence in shaping my generation’s ideas, and my own ideas, about mathematics! He was a principal actor in a localized turning point within his field and mine (the one around Grothendieck), not only through his own research but also by creating some of the most effective materials for training in the new methods.¹

Can this happen again with AI?

Is the introduction of mechanical methods analogous to the introduction of representable functors in algebraic geometry?

Is the history of mathematics necessarily a human history?

Is the last question an ethical one or is it purely technical? Is mathematics an expression of freedom, as Georg Cantor said, or is it governed by the iron laws of technological progress?

Human bias

When I read lines like

Is human bias something to be celebrated or eradicated?

from the presentation of today’s event, I inevitably think of the Dialectic of Enlightenment by Adorno and Horkheimer (written shortly before the radical separation of pure and applied mathematics) with quotations like this one:

enlightenment ... equates thought with mathematics. Thought is reified as an autonomous automatic process aping the machine it has itself produced so that it can finally be replaced by the machine... mathematics made thought into a thing, a tool, to use its own terms.

So for (certain) critical philosophers, mathematics is already a machine that threatens to eradicate human bias in the form of thought.

Mechanical slaves (two views)

... all those who have to know something about the soul ... bear witness to the fact that it has been ruined by mathematics and that in mathematics is the source of a wicked intellect that, while making man the lord of the earth, also makes him the slave of the machine.

(Musil, The Man without Qualities, chapter 11)

On the other hand, here is Hao Wang (Towards Mechanical Mathematics, 1960):

The superiority of machines in this respect indicates that machines, while following the broad outline of paths drawn up by man, might yield surprising new results .... We are in fact faced with a challenge to devise methods of buying originality with plodding, now that we are in possession of slaves which are such persistent plodders.

So…

Who is to be the master?

Since the industrial revolution the ideology of progress has constantly been accompanied by critical voices.

In a Technopoly, we tend to believe that only through the autonomy of techniques (and machinery) can we achieve our goals. This idea is all the more dangerous because no one can reasonably object to the rational use of techniques to achieve human purposes. ... The question ... is and always has been, Who is to be the master? Will we control it, or will it control us? The argument, in short, is not with technique. The argument is ... with techniques that become sanctified and rule out the possibilities of other ones. Technique... tends to ... become[] autonomous, in the manner of a robot that no longer obeys its master.

(Neil Postman, Technopoly (1993) Chapter 8)

(I couldn't resist… but Lewis Mumford and David Mumford are not related.)

The machine had an anti-social bias: it tended by reason of its “progressive” character to the more naked forms of human exploitation... [It] was displacing every other source of value partly because the machine was by its nature the most progressive element in the new economy .... Life was judged by the extent to which it ministered to progress, progress was not judged by the extent to which it ministered to life.

(Lewis Mumford, Technics and Civilization (1934) Chapters III.8 and IV.9).

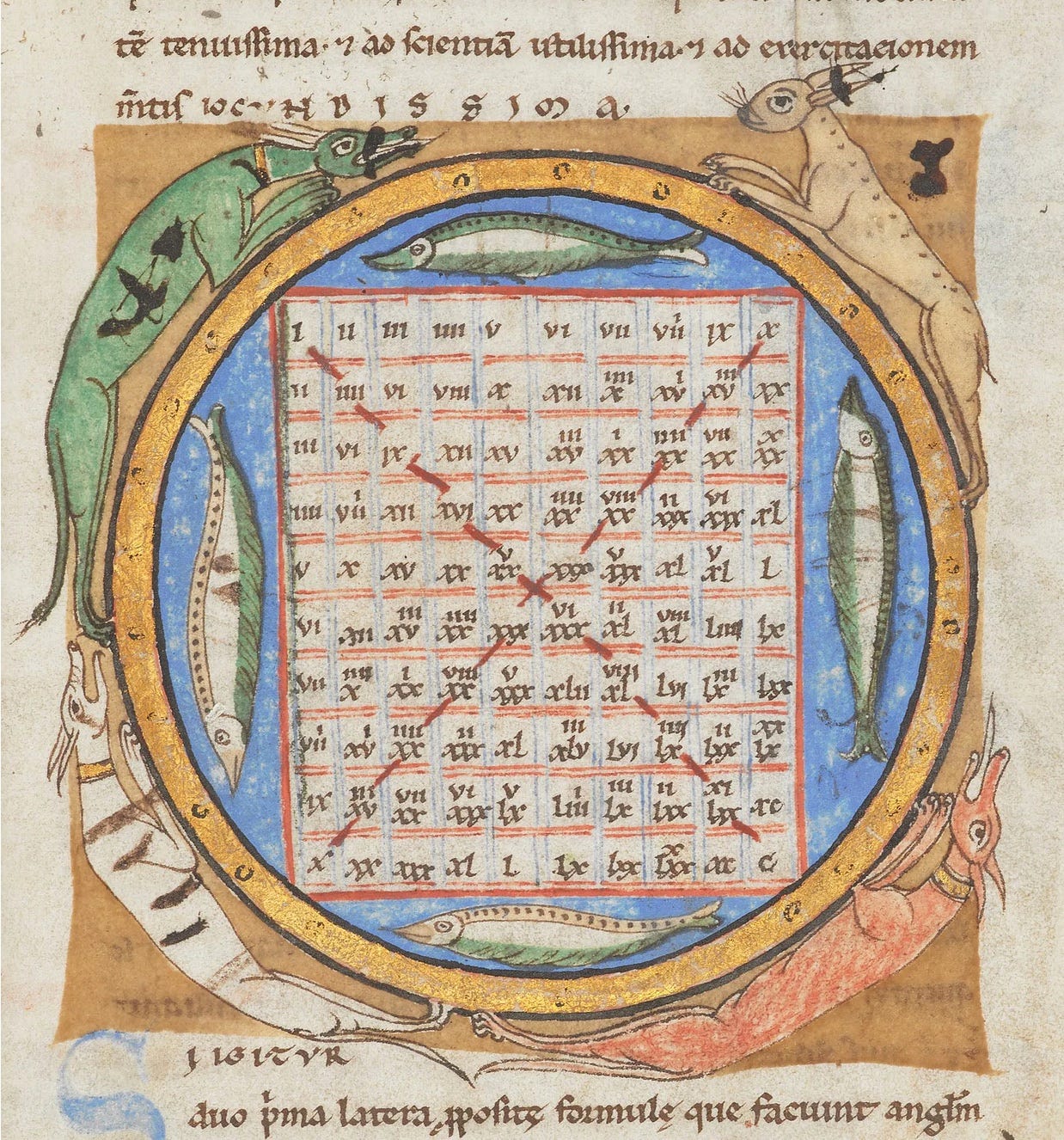

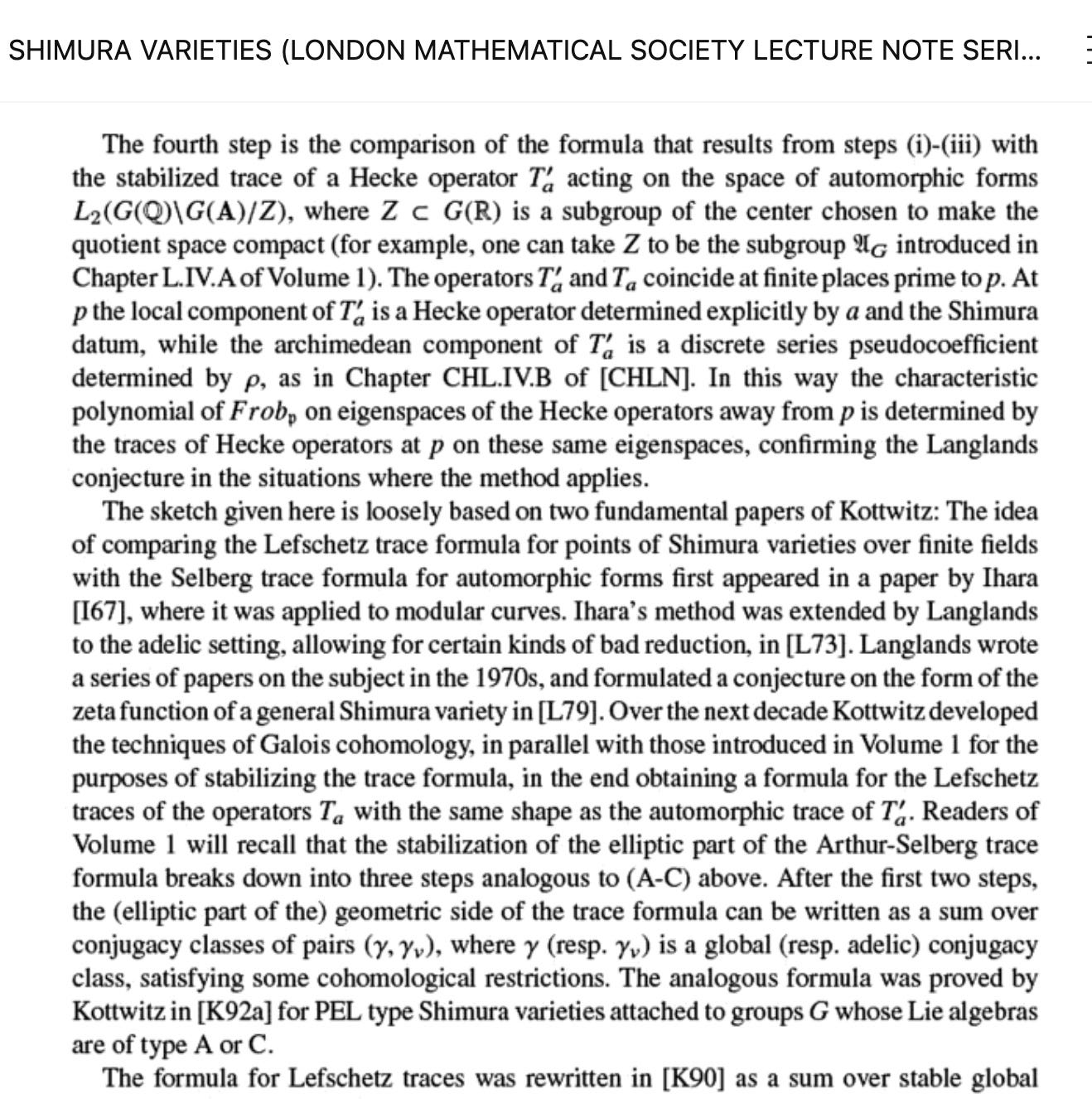

What mechanization looks (and feels) like, in two pictures

This is (one) effect of the mechanization of the production of mathematical texts: fish are no longer welcome as illustration.

There are powerful critical voices within the industry

... we do not agree that our role is to adjust to the priorities of a few privileged individuals and what they decide to build and proliferate. We should be building machines that work for us, instead of “adapting” society to be machine readable and writable. ... The actions and choices of corporations must be shaped by regulation which protects the rights and interests of people.

(Response by the authors of the Stochastic Parrots article to the March 2023 Future of Life Institute letter.)

I find it shocking that mathematicians talk and write about AI without attending to these voices.

The suffering machine

As part of National Pain Week (24–30 July 2023), UQ’s Professor Brian Key and Professor Deborah Brown... consider whether advanced AI could one day feel pain like humans, since artificial neural networks are increasingly designed to reflect human neural networks.

(from Ben Lerner, “The Pain Artist,” NY Review of Books)

Would such a development favor the project of mechanization of mathematics?

Optimism

David (Mumford) writes that the mathematical robots “will have agency and it will be hard not to think they have awareness, esp. if people become ‘fond’ of them.” But also that

They will want whatever their programmers put in their code.

The implicit assumption is that an AGI will passively accept its role as a collaborator, or “slave,” as Hao Wang put it.

Question: Is the ability to choose priorities a prerequisite for mathematical creativity? If so, might an AI capable of mathematical creativity reply to its programmer, like Melville’s Bartleby, “I would prefer not to”?

Scrutinizing the motives

Any time you see a mix of authors who are employed by a company and authors who work at a university, you should really scrutinize the motives of the company for contributing to that work... We used to look at people employed in academia to be neutral scholars, motivated only by the pursuit of truth and the interest of society.

(“Silicon Valley is pricing academics out of AI research?”, Washington Post, quoting former Meta research manager David Harris, March 11, 2024)

This sort of observation appears in the press with some regularity, which is hardly surprising, given the history of corporate R & D. I find it shocking, again, that mathematicians don’t take these considerations seriously.

Giving sociopaths power over our lives and institutions

Right now, there are only a handful of companies with the resources needed to create these large-scale AI models and deploy them at scale. And we need to recognize that this is giving them inordinate power over our lives and institutions.

(Meredith Whittaker, in an interview with CNBC, November 2023)

To dig down into OpenAI history, the initial phase of OpenAI saw massive support from industry tycoons, including notable contributions from Elon Musk and Peter Thiel.

Their shared dream was to ensure AI’s immense potential didn’t end up concentrated in a few hands. As the landscape evolved, Musk’s AI ambitions were overshadowed, leading the visionary to make the strategic decision to resign from the board in 2018...

(From Technopedia, March 2024.)

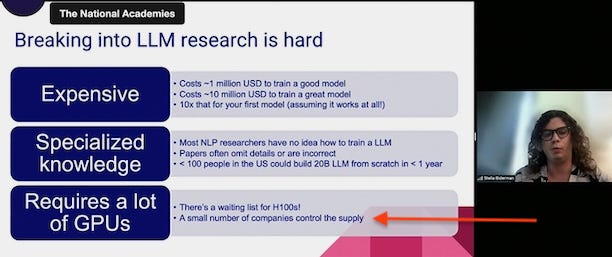

Only the industry has enough computing power for training

David refers to Google and OpenAI proprietary technology.

Even if you ignore the lessons of the OpenAI board uprising, what will an AI turning point look like under the aegis of the industry?

The dilution danger

As A.I. capabilities ramped up in 2022, I wrote on the risk of culture’s becoming so inundated with A.I. creations that when future A.I.s are trained, the previous A.I. output will leak into the training set, leading to a future of copies of copies of copies, as content became ever more stereotyped and predictable.

(Erik Hoel, “A.I.-Generated Garbage Is Polluting Our Culture,” NY Times, March 29, 2024)

Conjecture 3: The current rate-limiting step for AI mathematics is: producing orders of magnitude more lines of (high-quality) formalized mathematics.

(Alex Kontorovich at the June 2023 NASEM workshop.)

An earlier speaker at the workshop estimated that the existing corpus of formalized mathematics would have to be increased, in fact, by five orders of magnitude. The proposed solution is to use AI to generate this formalized mathematics.

So human mathematics would make up 1/100000 of the training set.
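The arithmetic behind this figure is simple, but it may be worth making explicit. The sketch below assumes, for illustration only, that the existing formalized corpus is entirely human-written and that everything added to reach the five-orders-of-magnitude target is machine-generated; `human_share` is a hypothetical helper, not anything proposed at the workshop.

```python
from fractions import Fraction


def human_share(orders_of_magnitude: int) -> Fraction:
    """Share of the enlarged corpus that is human-written.

    Assumption (hypothetical): the existing corpus is all human-written,
    and the corpus is scaled up by a factor of 10**orders_of_magnitude
    using only machine-generated material.
    """
    # Total size, measured in units of the existing (human) corpus.
    total = 10 ** orders_of_magnitude
    # The human part is still just one such unit.
    return Fraction(1, total)


print(human_share(5))  # prints 1/100000
```

Under these assumptions, each additional order of magnitude divides the human share by ten.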

What could go wrong?

Is humanity due for an upgrade?

Eradicating human bias is only one aspect of the long-term project of the transhumanist wing of the sociopaths just encountered.

Even those who are not transhumanists aspire to cure humanity and replace it with something less flawed, in the spirit of We by Yevgeny Zamyatin:

No more delirium, no absurd metaphors, no feelings – only facts. ... I cannot help smiling; a splinter has been taken out of my head, and I feel so light, so empty!

And just yesterday (April 21) Gary Marcus (normally a humanist) posted this on his own newsletter:

...we don’t want AGI to lie or suffer from psychiatric disorders...

Are we so sure?

Are humans defective?

No one stops to think that perhaps the flaws are inseparable from the objectives. Perhaps, as I wrote on my Substack:

A self-driving car will only attain “human-level” performance if it is also capable of road rage; an artificial nurse capable of caring for babies will also be liable to kill them maliciously; and a “human-level” mathematical machine will be subject not only to all 265 entries in the DSM table of contents but also ... the minor disorders familiar to every mathematician’s life partner: those as innocent as withdrawing without warning from a conversation to stare blankly into space, those as exasperating as the wishful thinking that motivates persistence in a research direction that has already proved futile, those as heartbreaking as the suicidal despair that ensues when the wishful thinking must finally be abandoned.

Wenn du nicht irrst, kommst du nicht zu Verstand. (“If you do not err, you will not attain understanding.” Mephistopheles, Faust II, 7847)

Lefschetz never stated a false theorem and never wrote a correct proof. (Philip Griffiths)

Should the “human bias” of Lefschetz have been eradicated?

A similar question could be posed of the Italian school of algebraic geometry.

Errors are an integral part of “doing” mathematics

Lakatos’s book Proofs and Refutations argues, with the example of Euler’s formula for polyhedra, that erroneous proofs are an inevitable stage in the development of theorems.

Requiring all proofs to be accompanied by machine-readable formal versions makes this stage impossible.

Poincaré’s errors were particularly fruitful; the error in his prize monograph on the three-body problem was the starting point for chaos theory.

Where would mathematics be if these Simil-proofs (after Silvia de Toffoli) had not been published?

¹ As a student, my training in algebraic geometry consisted in reading four of Mumford’s books cover to cover; at least two of them are still required reading, along with much of what I read during the course of my career.

What I think AI will do is open up mathematics to so many more people, because suddenly things will become easy that were hard to do, allowing you to focus on ideas rather than technical details, while at the same time preserving the technical details down to the lowest level, because ultimately, without the details, there is no mathematics. Of course the space in which to express these ideas must allow for errors. I think what it will do is bring together pure and applied mathematics again. I set out to build the theorem proving system of my dreams, clearly an applied pursuit, and what I found was the logic of my dreams, which I would rate as rather pure. And I made plenty of errors finding that logic, most of which have their own DOI. I am actually just now writing a book about this logic that corrects these past errors and overcomplications: http://abstractionlogic.com . Note that the subtitle of the book is "A New Foundation for Reasoning, Computing, and Understanding." At first the subtitle was "A New Foundation for Reasoning and Computing", but then I thought of this blog. :-)

Today is the third (and final) day of the "Second Cosmic Explorer Symposium" ... in which the community of gravitational wave observers tackles the problem of providing "a human face for astrophysical general relativity and cosmology" ... uhh ... along with the problems of ACTUALLY DESIGNING, FUNDING, CONSTRUCTING, and OPERATING the next generation of gravitational wave observatories.

These folks are centralizing their enterprise---centralizing it very effectively---around the question "What foreseeable problems are hard to fix, and tragic if we get them wrong?" (as Matthew J. Evans' presentation felicitously phrased it).

Perhaps it would be no bad thing if the folks pursuing the development of AI in Mathematics centralized more of their discussion(s) around this same question?